For me, anyway, the most surprising thing about Samsung’s disappointing earnings was just how surprised many folks seemed to be. The smartphone market is a massive one, but also rather predictable if you keep just a few key things in mind:

- Everyone will own a smartphone – I don’t think this is controversial, but it’s important, as there are a few implications of this fact that are perhaps non-obvious.

- The majority of buyers will prioritize price – The implication of a phone being a need and not a want is massive downward pressure on the average selling price for two reasons:

  - Low income buyers who might normally not buy consumer electronics or other computing devices will be a part of the phone market, and will buy low-priced models by necessity

  - Higher income buyers who are uninterested in other consumer electronics or other computing devices will be a part of the market, and will buy the low-priced models by choice

  The net effect is that the average selling price for a phone will be low and always decreasing, while the high-end market will be relatively small in percentage terms.

- Absolute numbers matter more than percentages – While it’s natural to talk about market size as a percentage, the absolute size is just as important. In the case of Apple, for example, the fact they “only” had 15.5% of the market in 2013 is less important for understanding the iPhone’s viability than is the fact they sold 153.4 million iPhones. That is more than enough to support the iOS ecosystem, percentages be damned.

- There will always be a high end segment – The very reasons why everyone will buy a phone (always with you, access to information, communication) are the same reasons there will always be a segment of the population willing to pay for a superior product. The analogy to cars is perhaps overdone, but for good reason: it makes a lot of sense. Like cars, phones are about appearance, performance, and experience; both are status symbols; and (in most parts of the world) both are necessities.

- The high end isn’t that expensive on an absolute basis – Where the car analogy breaks down is absolute prices. The cheapest Mercedes-Benz you can buy (in the U.S.) is a surprisingly accessible $29,900. That, though, is 46x as expensive as an iPhone 5S. Sure, an iPhone 5S is a bit more than 3x as expensive as a Moto G, but the absolute price difference is only about $500; a car 1/3 the price of that Mercedes would have an absolute price difference of $20,000.

- Low end quality is improving rapidly – That Moto G is a very nice phone that absolutely does the job for most people. It’s also not that big a deal, particularly in Asia where there are even cheaper and more capable phones available based on an SoC from MediaTek. Moreover, the entire supply chain continues to improve and bring down prices on every part of a smartphone, improving the quality of even the most inexpensive products.

- Fleshed-out App Stores are table stakes – By fleshed-out app stores, I mean the iOS App Store and Android’s Play Store, full stop. It’s nice that Windows Phone and the Amazon Fire app stores are getting some big names, but the problem with an 80/20 approach (in this case, shooting for 20% of apps that satisfy 80% of needs) is that everyone differs on the remaining 20%, and it’s usually that 20% that is the most important for any one user. Of course, some users don’t really care, but those are likely super low-end customers anyway, who aren’t great for margins or your ecosystem.

- Carriers matter, at least for the high end – Many customers, particularly in developed markets, are loyal to their carriers and only choose phones which are available on their preferred network. On the flipside, markets in which people move freely between carriers (or use dual-SIMs) are usually lower-income markets with smaller high end segments.

- Screen size matters – The one physical characteristic that seems to impact phone selection is screen size. While the large screen phones are a relatively low percentage of the total phone market, they are a much higher percentage of the high end.

- Software matters – For years analysts treated all computers the same, regardless of operating system, and too many do the same thing for phones. I personally find this absolutely baffling; you cannot do any serious sort of analysis about Apple specifically without appreciating how they use software to differentiate their hardware. The fact is that many people buy iPhones (and Macs) because of the operating system that they run; moreover, that operating system only runs on products made by Apple. Not grokking this fact is at the root of almost all of the Apple-is-doomed narrative (which, by the way, is hardly new).

  Software-based differentiation extends to apps. While a fully fleshed-out app store is table stakes, for the high end buyer app quality matters as well, and here iOS remains far ahead of Android. I suspect this is for three reasons:

  - The App Store still monetizes better, especially in non-game categories

  - iOS is easier to develop for due to decreased fragmentation

  - Most developers and designers with the aptitude to create great apps are more likely to use iOS personally

  None of these factors are likely to go away, even as Android catches up with game-based in-app purchases and as iOS increases in screen size complexity.

It is this final point that makes the Samsung news so unsurprising. Samsung had built up a healthy high-end business by:

- Being available on nearly every carrier

- Pioneering the large-screen segment

- Producing hardware that was meaningfully superior to low-end offerings

All three of these factors either have disappeared or are in the process of disappearing:

- After a two-year lull, Apple has greatly expanded iPhone availability worldwide

- As noted above, the gap between low and high-end hardware is disappearing

- Multiple manufacturers have moved into the large-screen segment, with the iPhone coming soon

In China, Samsung has another problem: their brand and distribution channel, which they have spent billions building, are no match for Xiaomi’s star power, which lets the startup sell phones at cost without any additional marketing or channel expenses. It’s not helpful to (rightfully) say this issue is primarily limited to China (I’m more skeptical of Xiaomi’s prospects elsewhere, but bullish on Lenovo) because the Chinese market is the largest market in the world.

Ultimately, though, Samsung’s fundamental problem is that they have no software-based differentiation, which means in the long run all they can do is compete on price. Perhaps they should ask HP or Dell how that goes.

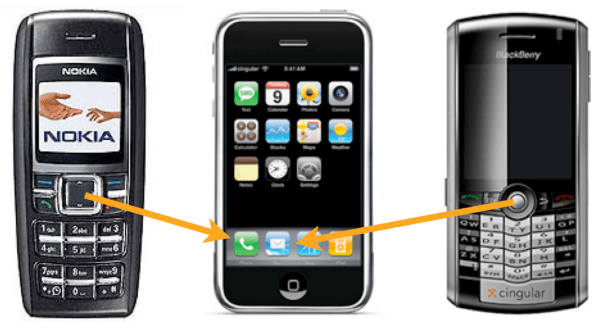

In fact, it turns out that smartphones really are just like PCs: it’s the hardware maker with its own operating system that is dominating profits, while everyone else eats themselves alive to the benefit of their software master.

Previous articles on Samsung’s troubles:

- Two Bears from April 2013

- Two Bears Revisited from February 2014