To understand how Google ended up with a €4.3 billion fine and a 90-day deadline to change its business practices around Android, it is critical to keep one date in mind: July 2005.1 That was when Google acquired a still-in-development mobile operating system called Android. To put the acquisition in context, Steve Jobs was, at least publicly, “not convinced people want to watch movies on a tiny little screen”. He was, of course, referring to the iPod; Apple would go on to release an iPod with video playback a few months later, but the iPhone was still a year and a half away from being revealed.

In other words, Android, at least at the beginning, wasn’t a response to Apple;2 the real target was Microsoft (and to a lesser extent BlackBerry), which seemed poised to dominate smartphones just as it had the desktop. That was an untenable situation for Google; then-Vice President of Product Management Sundar Pichai wrote on the Google Public Policy blog about the company’s challenges on PCs:

Google believes that the browser market is still largely uncompetitive, which holds back innovation for users. This is because Internet Explorer is tied to Microsoft’s dominant computer operating system, giving it an unfair advantage over other browsers. Compare this to the mobile market, where Microsoft cannot tie Internet Explorer to a dominant operating system, and its browser therefore has a much lower usage.

What mattered to Google was access to end users: that is what makes the Aggregation flywheel turn. On PCs the company had succeeded through a combination of flat-out being better, the fact that it was very simple to visit a new URL (and make it your homepage), and deals with OEMs to set Google as the homepage from the beginning. All three would be more difficult to achieve on mobile, at least mobile as it was understood in 2005: applications were notoriously difficult to find and install, and Microsoft and BlackBerry had locked down their operating systems to a much greater extent than Microsoft had on the PC.

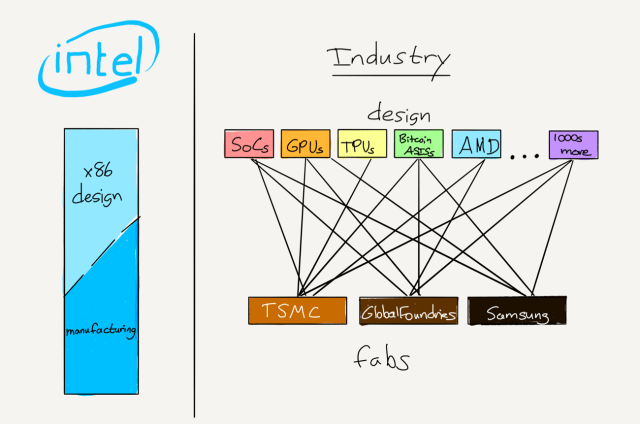

Thus the Android gambit: Google decided to take on Microsoft directly in mobile operating systems, and its most powerful tool would not be the quality of the operating system, but the business model. To that end, while Google did, naturally, retool Android’s user interface once the iPhone was announced, the business model remained Microsoft kryptonite: whereas Microsoft charged a per-device licensing fee, just as it had with Windows, Android would not only be free and open source, but Google would actually share search revenue derived from Android with OEMs that installed the operating system.

Of course Android also ended up being a much better experience than Windows Mobile in the post-iPhone world, and the deal was irresistible to OEMs flailing for a response to the iPhone: get a (somewhat) comparable (sort-of) touch-based operating system for free, and even make money after the initial sale! Indeed, not only did Android effectively kill Microsoft’s mobile efforts, it went on to take over the world via a massive ecosystem of device makers and mobile carriers that competed to drive down costs and increase distribution.

Android’s Success

That Android increases competition was the focus of the latest blog post from Pichai, now Google’s CEO, in response to the ruling:

Today, the European Commission issued a competition decision against Android, and its business model. The decision ignores the fact that Android phones compete with iOS phones, something that 89 percent of respondents to the Commission’s own market survey confirmed. It also misses just how much choice Android provides to thousands of phone makers and mobile network operators who build and sell Android devices; to millions of app developers around the world who have built their businesses with Android; and billions of consumers who can now afford and use cutting-edge Android smartphones. Today, because of Android, there are more than 24,000 devices, at every price point, from more than 1,300 different brands…

What Pichai doesn’t say is that this competition was not so much a feature as the point: open-sourcing Android commoditized smartphone development, meaning anyone could enter, even as few were in a position to profit over time. That included Google, at least at the beginning, which was by design: remember, the point of Android was not to make money like Windows, it was to stop Windows or any other operating system from getting between Google and users. Venture capitalist Bill Gurley explained in a 2011 post entitled “The Freight Train That Is Android”:

Android, as well as Chrome and Chrome OS for that matter, are not “products” in the classic business sense. They have no plan to become their own “economic castles.” Rather they are very expensive and very aggressive “moats,” funded by the height and magnitude of Google’s castle [(search advertising)]. Google’s aim is defensive not offensive. They are not trying to make a profit on Android or Chrome. They want to take any layer that lives between themselves and the consumer and make it free (or even less than free). Because these layers are basically software products with no variable costs, this is a very viable defensive strategy. In essence, they are not just building a moat; Google is also scorching the earth for 250 miles around the outside of the castle to ensure no one can approach it. And best I can tell, they are doing a damn good job of it.

Indeed they were, but the strategy had a built-in problem: Android was, well, open source, and the same openness that helped Android spread meant it could just as easily be forked into an initially compatible operating system that didn’t connect to Google’s services, which were the castle Google was trying to protect all along. Google needed a wall for its moat, and found one in the Google Play Store.

The Google Play Store and Google Play Services

The Google Play Store, not unlike Android’s user interface, was a response to the iPhone, specifically the highly successful launch of the App Store in 2008. And while the Play Store often lagged the App Store when it came to cutting-edge apps, particularly in the early days, it quickly became one of Google’s most valuable services, both in terms of making Android useful as well as making Google money.

Note, though, that the Play Store is not a part of Android: it has always been closed-source and exclusive to Google’s version of Android, as are other Google services such as Gmail, Maps, and YouTube. The problem Google had with all of those apps was that they were updated with the operating system, and OEMs and carriers, who made money only when a device was initially sold, were not particularly incentivized to update the operating system.

Google’s solution was Google Play Services. Distributed via the Play Store and supporting devices running Android 2.2 Froyo or later, Google Play Services provided an easily updatable API layer that, in its initial version, allowed Google to update its own apps independently of operating system updates. It was an elegant solution to a real problem inherent in the free-wheeling model Google had taken for Android distribution: widespread fragmentation. Soon all of Google’s apps were built on top of Google Play Services, and in 2012 Google started opening it up to developers.

The initial version was quite modest; here is the announcement on Google+:

At Google I/O we announced a preview of Google Play services, a new development platform for developers who want to integrate Google services into their apps. Today, we’re kicking off the full launch of Google Play services v1.0, which includes Google+ APIs and new OAuth 2.0 functionality. The rollout will cover all users on Android 2.2+ devices running the latest version of the Google Play store.

Over the next several years, though, Google devoted more and more of its effort (and its most interesting APIs, like location, maps, and gaming services) to Google Play Services; meanwhile, whatever equivalent service existed in the open-source version of Android was effectively frozen in time. The net result is incredibly significant to teasing out this case: Google Play Services silently shifted ever more apps from Android apps to Google Play apps. Today, no Google app will function on open-source Android without extensive reworking, and the same applies to ever more third-party apps.

That noted, it is hard, in my estimation, to see this as an antitrust violation. The fact of the matter is that Google was addressing a legitimate problem in the Android ecosystem, and the company didn’t force any developer to use Google Play Services APIs instead of the more basic ones that remain available today.

The European Commission Case

The European Commission found Google guilty of breaching EU antitrust rules in three ways:

- Illegally tying Google’s search and browser apps to the Google Play Store; to get the Google Play Store, and thus a full complement of apps, OEMs have to pre-install Google Search and Chrome and make them available within one screen of the home page.

- Illegally paying OEMs to exclusively pre-install Google Search on every Android device they made.

- Illegally barring OEMs that installed Google’s apps from selling any device that ran an Android fork.

Taken in isolation, these seem to run from least problematic to most problematic.

- The Google Play Store has always been an exclusive Google app; it seems that Google ought to be able to distribute it exclusively as part of a bundle if it so chooses.

- Tying OEMs’ entire share of Google Search revenue to search exclusivity across all of their devices quite obviously makes it very difficult for alternative search services to build share (as they lack access to pre-installs, one of the most effective channels for customer acquisition); this seems to be more of a Google Search dominance issue than an Android dominance issue, though.

- Predicating the availability of any of Google’s apps, including the Google Play Store, on OEMs not taking advantage of Android’s open-source nature, even on devices that will not include Google apps, seems much more problematic than Google insisting its apps be distributed in a bundle. The latter is Google’s prerogative; the former is dictating OEM actions just because Google can.

This is where the history of Android matters; before Google Play Services, the primary challenge in building a competitive fork of Android would have been convincing developers to upload their apps to a new app store (since Google would obviously not want to put its apps, including the Play Store, on said fork). That fork, though, never materialized because of Google’s contractual terms barring OEMs from selling any devices built on such a fork.

Today the situation is very different: that contractual limitation could go away tomorrow (or, more accurately, in 90 days), and it wouldn’t really matter because, as I explained above, many apps are no longer Android apps but are rather Google Play apps. Getting them to run on an Android fork is by no means impossible, but most would require more rework than simply being uploaded to a new app store.

In short, in my estimation the real antitrust issue is Google contractually foreclosing OEMs from selling devices with non-Google versions of Android; the only way to undo that harm in 2018, though, would be to make Google Play Services available to any Android fork.

The Commission’s Remedies

That, though, is not what the European Commission ordered (in fact, “Google Play Services” does not appear a single time in the press release); the Commission seems to feel that the three issues do stand alone. That means Google has to respond to each individually:

- Google has to untie the Play Store from Search and the Chrome browser

- Google has already stopped paying OEMs for portfolio-wide search exclusivity

- Google can no longer stop OEMs from selling devices with Android forks

The most momentous by far is the first (even though it is the weakest allegation, in my estimation). In 90 days, Samsung, or any other OEM, could sell a device with only Bing search and the Google Play Store (from which, of course, Google Search could be downloaded). This will likely benefit consumers: Microsoft, Google, and other providers will soon be bidding to be the default search option, and, given the commoditized nature of Android devices, it is likely that most of what they are willing to pay will go towards lower prices.

Still, it is an unsatisfying remedy: Google built Android for the express purpose of monetizing search, and to be denied that by regulatory edict feels off; Google, though, bears a lot of the blame for going too far with its contracts.

More broadly, the European Commission continues to be a bit too cavalier about denying companies (well, Google, mostly) the right to monetize products they spend billions of dollars developing at significant risk; this was my chief objection to last year’s Google Shopping case. In this case I narrowly come down on the Commission’s side almost by accident: I think Google acted illegally by contractually foreclosing Android competitors at a time when those competitors might have made a difference, but I am concerned that the Commission’s publicly released reasoning doesn’t seem to grasp exactly how Android has developed, the choices Google made, and why.

That noted, I highly doubt Google would do anything differently: when it comes to the company’s goals, Android could not be a bigger success; if anything, this ruling is evidence of just how successful the product was.